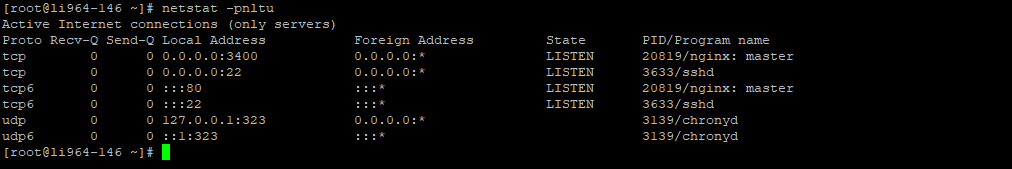

While working on the relaunch of tomorrow.one early last year, we moved the whole web tech stack to Kubernetes and switched to a project-based, dockerized NGINX setup for our web frontends. This made us more flexible with upcoming requests, but it also opened the door to new challenges and pitfalls. Besides implementing language redirects for German and English browser languages, we had to create and maintain a lot of redirects for outdated pages, so that we wouldn't miss out on traffic to old URLs and lose potential customers.

To apply the new URL structure to all incoming requests, I created the following redirect rule within a custom NGINX conf file. The rule knows about `$language_suffix` through an Accept-Language configuration:

```
rewrite ^/$ permanent
```

I tested all kinds of incoming requests against that NGINX configuration while running the Docker container locally. I was totally confident that everything would be redirected as expected. For example, a request to the root URL was redirected to the English version of the page (if the `Accept-Language` field in the HTTP header includes `en`). In these tests, my main goal was to get the `https` scheme and a missing trailing slash `/` applied correctly. After merging that update and testing all common redirect scenarios again on a testing environment within the platform, I was sure that everything was running as expected.

Some days ago we wanted to improve our continuous integration (CI) workflow to decrease our pipeline runtimes and the footprint that comes with them. We knew that some ConfigMap environment variables would let us switch to a different build pipeline approach for each environment we needed. We also used that time to improve some CI configurations, which made maintaining the needed environment variables more secure. With the help of one of our Platform Engineers (Thanks René!), we found a nice solution that let us switch from four to three Docker images while still covering all needed testing and preview environments. This sped up our build pipeline by ~30%, awesome!

After testing this improvement and merging it to production, I was confident and really happy that everything was running as expected. But not this time.

A few hours later, customers and our support team got back to us. They reported that they couldn't access some of our web pages. A quick check showed that some URLs were running into a "This site can't be reached" error. We also noticed that some requests were trapped in an endless redirect loop. So we rolled back and took a deeper look at our recently added changes.

The update introduced one major change, which we could also easily spot in the unreachable URLs:

```
- listen 80
```

We switched from port 80 to 8080 within the NGINX and Kubernetes service configuration. The unreachable URLs contained that port change as well, because port 8080 showed up in the redirected URLs.

We analyzed the redirect behaviour with some curl commands and found the root cause of the endless redirects. `$http_host` within an NGINX config doesn't do what I thought it would do.

```
# do a request with a missing trailing slash and some URL parameters
curl -IL
# results as expected in a 301, which comes from the rewrite permanent notation
# but why did the 'https' go missing, while the trailing slash has been added?
# the next request applies the https again!?
Location:
```

After some research, we fixed our redirect error by replacing `$http_host` with `$host`. Learning: `$http_host` includes the port number (if present in the request), `$host` doesn't. We also had to make sure that redirects didn't pick up the port change, so we set `absolute_redirect off`. This stops port 8080 from being added to a redirected URL.

So this hadn't been running as expected from day one. It didn't come up earlier because other services in front of this site (e.g. an EC2 server) were covering for that misconfiguration. With the switch to port 8080 we were able to identify this pitfall, and we improved the NGINX config to match our desired result:

```
# disable absolute_redirect
absolute_redirect off;
```

Another curl test showed correct redirect handling:

```
curl -IL
# is doing a 301 as expected and adding the missing trailing slash
```

Finally, everything was running as expected.
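For reference, here is a minimal sketch of how both fixes could fit together in one conf file. It assumes the file is included in NGINX's `http` context and serves behind a Kubernetes service on port 8080. The `map` on `Accept-Language`, the `de` fallback and the `/$language_suffix/` target path are hypothetical, because the post doesn't show the real accept-language configuration or rewrite target. Only `absolute_redirect off` and the switch from `$http_host` to `$host` come from the fix described above.

```nginx
# Hypothetical mapping of the Accept-Language header to a language suffix;
# the real accept-language configuration is not shown in this post.
map $http_accept_language $language_suffix {
    default  de;     # assumed fallback language
    ~*^en    en;     # primary browser language is English
}

server {
    listen 8080;

    # Make redirects generated by nginx itself (e.g. the automatic
    # trailing-slash redirect for directories) relative, so the internal
    # port 8080 is not added to the Location header.
    absolute_redirect off;

    # $host never contains a port, while $http_host would pass on
    # "example.com:8080". The target path is an assumption for illustration.
    rewrite ^/$ https://$host/$language_suffix/ permanent;
}
```

With `absolute_redirect off`, the redirects that NGINX generates on its own no longer contain `http://` or `:8080`, so the `https` scheme and hostname set by the services in front stay intact.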
